Indoor fern survival baseline

A practical baseline for keeping indoor ferns alive by managing moisture consistency, humidity, and container size instead of relying on rituals.

The over-engineered plant authority

eFerns treats houseplants, lawn replacements, and shade beds with the extreme seriousness usually reserved for quarterly operations dashboards. Underneath the tone, the information architecture stays rigid, structured, and reusable.

These numbers are intentionally editorial rather than fictional SaaS telemetry. They encode constraints that shape the implementation and the content model.

Guides are written in MDX, but anything that should show up in a card, comparison, or related-content block lives in structured frontmatter.

A measured workflow for replacing weak turf in filtered light with plants that tolerate root competition, moisture shifts, and low-input maintenance.

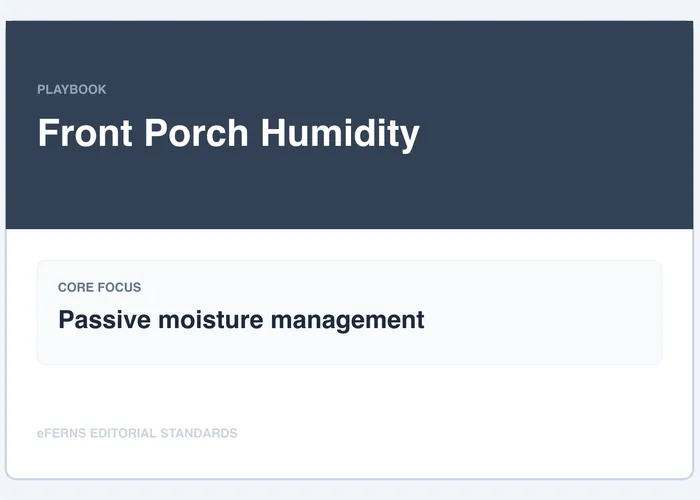

How to keep porch containers functional by focusing on placement, grouping, and irrigation consistency before adding gadget-heavy humidity systems.
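As a rough illustration of what "lives in structured frontmatter" could mean for the guide cards above, here is a minimal sketch in plain TypeScript. The field names (`title`, `summary`, `related`), the `/guides/<slug>/` route, and both function names are assumptions made for the example, not the site's actual schema. An Astro project would more likely express this as a content collection schema; plain TypeScript is used here only to keep the sketch self-contained.

```typescript
// Hypothetical frontmatter shape for a guide; the real field names are not shown in the source.
interface GuideFrontmatter {
  title: string;
  summary: string;
  related: string[]; // slugs of related guides
}

// What a card, comparison row, or related-content block would consume.
interface GuideCard {
  href: string;
  title: string;
  summary: string;
}

// Validate untyped frontmatter so a missing field fails loudly at build time.
function parseGuideFrontmatter(data: unknown): GuideFrontmatter {
  if (typeof data !== "object" || data === null) {
    throw new Error("frontmatter must be an object");
  }
  const { title, summary, related = [] } = data as Record<string, unknown>;
  if (typeof title !== "string" || title.trim() === "") {
    throw new Error("title is required");
  }
  if (typeof summary !== "string" || summary.trim() === "") {
    throw new Error("summary is required");
  }
  if (!Array.isArray(related) || !related.every((r) => typeof r === "string")) {
    throw new Error("related must be a list of slugs");
  }
  return { title, summary, related };
}

// Build the card from frontmatter alone; the MDX body is never parsed for this.
// The "/guides/<slug>/" route is an assumed URL pattern.
function toCard(slug: string, fm: GuideFrontmatter): GuideCard {
  return { href: `/guides/${slug}/`, title: fm.title, summary: fm.summary };
}
```

The point of the split is that cards never depend on prose: an editor can rewrite the guide body freely, and only a frontmatter change can alter what a card shows.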

Each entry uses the same light, water, soil, and maintenance vocabulary so editors can compare without inventing a new language on every page.

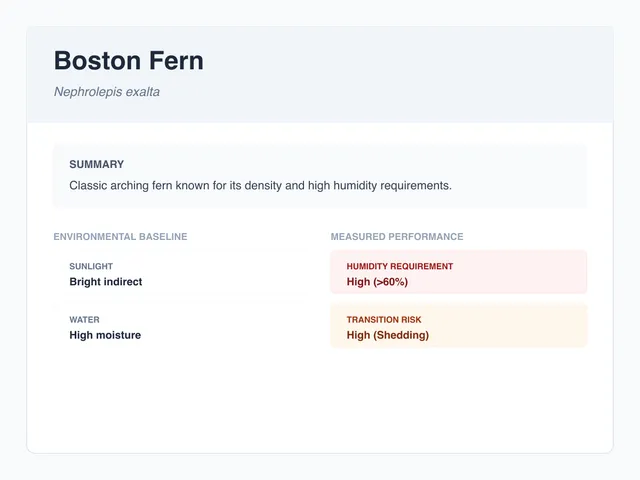

Nephrolepis exaltata

Classic indoor fern with dense fronds, high humidity demand, and a strong preference for steady root moisture.

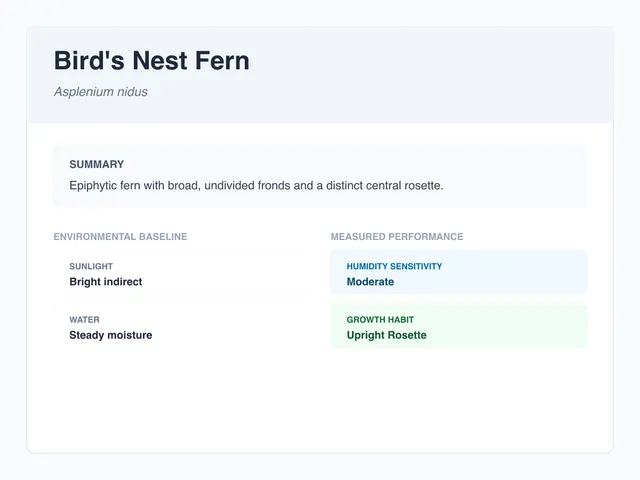

Asplenium nidus

Upright rosette fern that handles indoor life better than many frilled species, provided the crown stays dry.

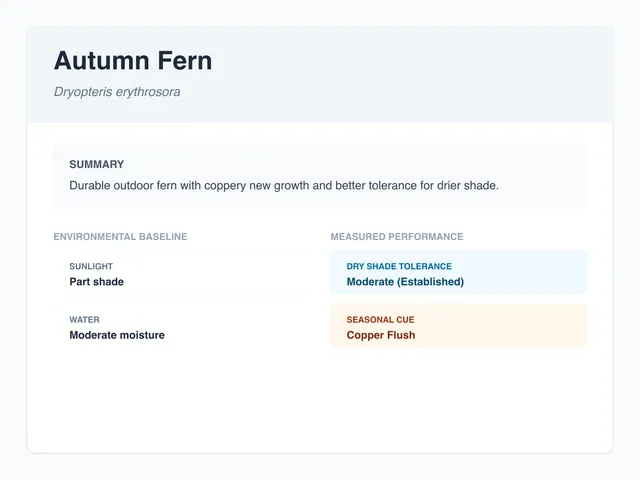

Dryopteris erythrosora

Durable outdoor fern with coppery new growth and better tolerance for drier shade than many woodland standbys.

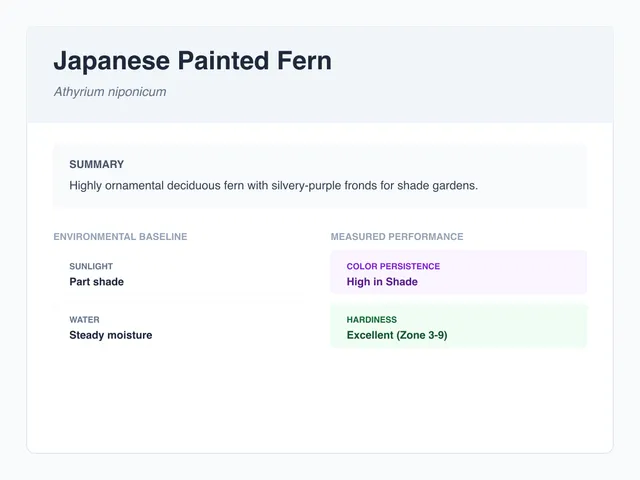

Athyrium niponicum

Deciduous fern valued for silver-gray fronds and precise shade-bed contrast rather than raw bulk.

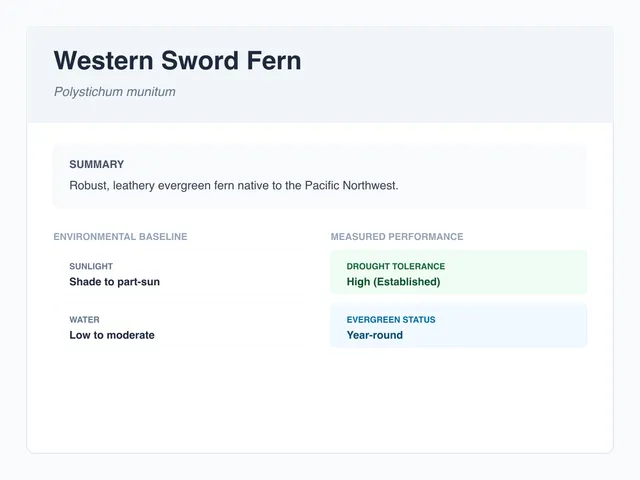

Polystichum munitum

Robust evergreen fern for woodland-style plantings with enough scale to replace weak turf-adjacent shade fillers.

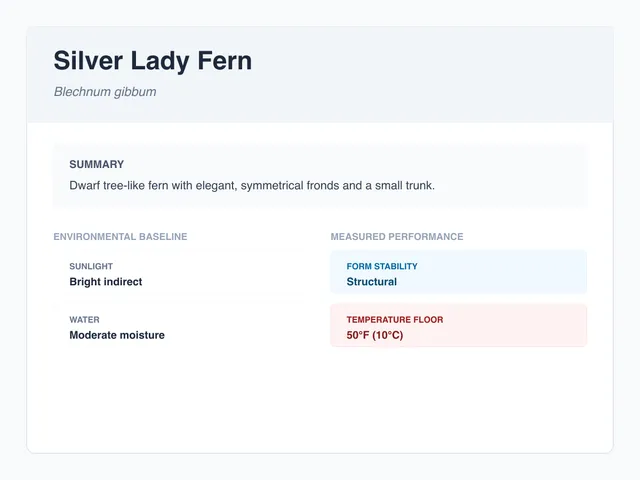

Blechnum gibbum

Compact tropical fern with a tidy habit that reads cleaner than Boston fern in smaller interior spaces.
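The shared light, water, soil, and maintenance vocabulary behind these entries can be sketched as a set of closed types, so that two profiles are always comparable field by field. The specific terms below (for example `"steady-moist"` or `"part-shade"`) and the sample values assigned to each plant are illustrative placeholders, not the site's actual data.

```typescript
// Hypothetical controlled vocabulary; the site's real terms are not shown in the source.
type Light = "bright-indirect" | "part-shade" | "full-shade";
type Water = "steady-moist" | "moderate" | "tolerates-dry";
type Soil = "moisture-retentive" | "free-draining";
type Maintenance = "low" | "moderate" | "high";

interface PlantProfile {
  botanicalName: string;
  light: Light;
  water: Water;
  soil: Soil;
  maintenance: Maintenance;
}

// The four fields every entry shares, in display order.
const FIELDS = ["light", "water", "soil", "maintenance"] as const;
type Field = (typeof FIELDS)[number];

// Return the fields on which two profiles disagree.
function differingFields(a: PlantProfile, b: PlantProfile): Field[] {
  return FIELDS.filter((field) => a[field] !== b[field]);
}

// Placeholder values for illustration only.
const bostonFern: PlantProfile = {
  botanicalName: "Nephrolepis exaltata",
  light: "bright-indirect",
  water: "steady-moist",
  soil: "moisture-retentive",
  maintenance: "high",
};

const autumnFern: PlantProfile = {
  botanicalName: "Dryopteris erythrosora",
  light: "part-shade",
  water: "tolerates-dry",
  soil: "moisture-retentive",
  maintenance: "low",
};

const differences = differingFields(bostonFern, autumnFern);
```

Because each field is a closed union, a typo such as `"steady moist"` fails type checking instead of silently creating a new term on one page.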

The tone is slightly absurd because much of the content market for this category is vague on purpose. The implementation is the opposite.

Mist every fern daily and humidity problems disappear.

Root-zone moisture plus ambient humidity explain more variance than leaf spray.

Replace ritual with the variables that actually changed the outcome.

Shade mixes are interchangeable as long as the bag says “woodland.”

Texture, drainage speed, and organic matter ratios change survival rates fast.

Soil should be documented like a spec, not a mood.

Turf replacement is mostly a design decision.

Hours of light, moisture stability, and maintenance tolerance eliminate bad candidates early.

A site audit saves more time than another inspiration board.

This one tiny interactive surface earns its keep. Checklist state is scoped to the page slug and task id, written to localStorage, and restored on reload.
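A minimal sketch of that persistence pattern, assuming one stored entry per page slug that maps task ids to a done flag. The `KeyValueStore` interface, the `checklist:<slug>` key format, and the function names are all hypothetical; the storage object is passed in so the logic can run outside a browser, and `window.localStorage` satisfies the same interface.

```typescript
// Minimal subset of the Web Storage API; window.localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

type ChecklistState = Record<string, boolean>;

// Assumed key format: one entry per page slug, holding every task id on that page.
function storageKey(slug: string): string {
  return `checklist:${slug}`;
}

// Restore saved state for a page; anything missing or malformed yields an empty checklist.
function loadChecklist(store: KeyValueStore, slug: string): ChecklistState {
  const raw = store.getItem(storageKey(slug));
  if (raw === null) return {};
  try {
    const parsed: unknown = JSON.parse(raw);
    if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
      return {};
    }
    const state: ChecklistState = {};
    for (const [taskId, done] of Object.entries(parsed)) {
      if (typeof done === "boolean") state[taskId] = done;
    }
    return state;
  } catch {
    return {}; // corrupted JSON: start clean rather than break the page
  }
}

// Record one task's state and write the whole page's checklist back.
function setTask(
  store: KeyValueStore,
  slug: string,
  taskId: string,
  done: boolean,
): ChecklistState {
  const state = loadChecklist(store, slug);
  state[taskId] = done;
  store.setItem(storageKey(slug), JSON.stringify(state));
  return state;
}
```

In the browser this would be called as `setTask(window.localStorage, slug, taskId, checked)` from a checkbox change handler, with `loadChecklist` run once on page load to restore the boxes.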

The most commercial version of this site would hide failed interventions. The better reference implementation leaves them in plain view.

Outcome: No durable improvement. Leaf surfaces looked better briefly after spraying, but edge crisping and the overall pattern of decline were functionally unchanged.

Intervention: Misted foliage once every morning for 6 weeks while keeping the same pot, room, and baseline watering schedule.

Read the full postmortem

Both. Publicly it is a rigorous editorial property. Underneath, it is also a reference implementation for structured, editor-safe Astro builds.

The core navigation still works with no JavaScript. Structured data should improve authoring and reuse before it adds runtime complexity.

Failed interventions are often the clearest way to explain what variable actually mattered.

Browse the collections, inspect the methodology, or open the component docs and treat the project like a starter for a real client build.